Over the Hedge: The Most Underrated Animated Movie You’ve Probably Forgotten About

Over the Hedge 2 had such potential, too.

The 26 Best Revenge Movies of All Time, Ranked By IMDB

With so many great revenge movies out there, Nerd Much? takes a look at the 26 best of all time, including Darkman, The Crow, John Wick, and more.

Modern Zombie Movies Are More Emotional Than Their Predecessors

The zombie film genre typically gets a bad rap from outsiders who don't watch those types of films, and the general consensus is...

Every New & Upcoming Horror Movie of 2024 & 2025

The 50 best upcoming horror movies of 2023 and beyond.

Camp Nowhere Was A Super Underrated 90s Gem

Camp Nowhere is a 1994 comedy film directed by Jonathan Prince and written by Andrew Kurtzman and Eliot Wald. The movie follows a group...

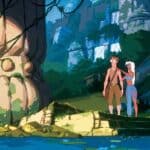

Why Disney’s ‘Treasure Planet’ Flopped Miserably

Disney's follow-up to Atlantis didn't quite land with audiences.

Why Disney’s Atlantis Was An Underappreciated Gem

The city of Atlantis was the perfect setting for a Disney film.

Popular Now

The Stormlight Archive Reading Order: Your Ultimate Guide

Love A Song of Ice and Fire and want something to satisfy your appetite for a similar world? Look no further than Brandon Sanderson's The Stormlight Archive.